Dark patterns are increasingly on the enforcement agenda for regulators across the world, as summed up in a recent US Federal Trade Commission (FTC) report:

“While dark patterns may manipulate consumers in stealth, these practices are squarely on the FTC’s radar.”

The ACCC itself has recently made recommendations for a suite of reforms to address the use of dark patterns. The FTC report makes a good side-by-side read as it sets out real world examples, including enforcement action the FTC has taken, and annexes a comprehensive catalog of types of dark patterns.

Escalating use of dark patterns

Using and capitalising on human psychology to encourage consumers to engage in certain behaviour is nothing new. It’s what the marketing and advertising industry has been doing for a very long time. But intuitively we all know these practices are much worse online because, as the FTC said:

“The pervasive nature of data collection techniques, which allow companies to gather massive amounts of information about consumers’ identities and online behavior, enables businesses to adapt and leverage advertisements to target a particular demographic or even a particular consumer’s interests… By contrast, consider the practical difficulties of incessantly rearranging the aisles of a grocery store that places sugary cereals at toddler eye-level and candy bars at the register to do the same.”

The FTC considered that more and more companies are using dark patterns precisely because they are so effective:

- one study discussed at the workshop found that dark patterns doubled the percentage of consumers who signed up for a dubious identity theft protection service, as compared to consumers who were presented with a neutral interface;

- dark patterns often are not used in isolation and tend to have even stronger effects when they are combined; and

- dark patterns also raise special enforcement challenges: because they are covert or otherwise deceptive, many consumers don’t realize they are being manipulated or misled.

There also seem to be differences in consumer risk between technologies. The FTC pointed to studies which show that some dark patterns are more common in mobile apps than on websites: some design techniques are more effective on smaller screens than on larger ones and providers are better able to hide important information from consumers on their mobile devices because the amount of scrolling required makes it unlikely that people will see it.

Inducing false beliefs

Classic examples include:

- Claims that an item is almost sold out when there is ample supply.

- False claims that other people are also looking at or have recently purchased the same product.

- Advertisements being designed to look like independent, editorial content.

The FTC’s action against a loan comparison site, LendEDU, alleged that while tables of loan rankings were silent on how they were ranked, the mere fact of ranking created the false impression that the loans were ranked based on favourability for the customer, whereas the ranking was in fact based on how much the third party providers paid LendEDU to be ranked.

The FTC cautioned that “companies are on the hook for the net impression conveyed by the various design elements of their websites, not just the veracity of certain words in isolation”. A common advertising style is to create a news item featuring a product. The FTC said that if an advertisement strongly resembles editorial content such as a news article, or appears formatted as native content in a publication with a strong journalistic brand, it is unlikely disclaimers will overcome the deceptive net impression.

Hiding or delaying important information

Some dark patterns hide or obscure important information from consumers, for example:

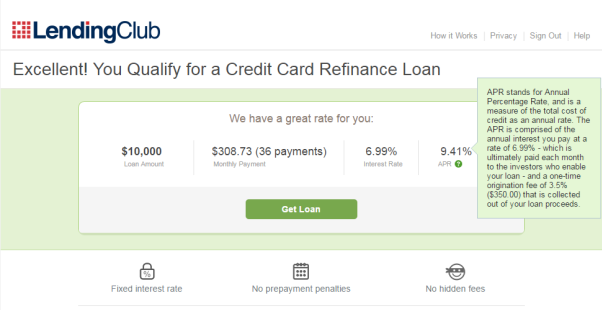

- Hiding key limitations of the product or service in dense terms & conditions that consumers don’t see or read. The FTC gave the below example of explanations of fees in tooltip or ‘hover-over’ buttons, which the FTC said most consumers were unlikely to click during an application process.

- Burying junk fees or using "drip pricing", where businesses advertise only part of a product's total price to lure in consumers and only mention other charges later. The FTC pointed to a study which found that users who weren’t shown the ticket fees upfront ended up spending about 20% more money and were 14% more likely to complete the purchase.

As dark patterns particularly impact disadvantaged and vulnerable groups, companies need to be alive to how design techniques will impact the range of users. For example, if a business markets a product to older adults, the FTC said it should avoid design elements that are harder for older consumers to perceive, such as putting important information at the periphery of the screen or in a light colour.

Unauthorised charges

Another common dark pattern involves tricking someone into paying for goods or services without consent. For example:

- Deceptive subscription sellers may saddle consumers with recurring payments for products and services they never intended to purchase or that they do not wish to continue purchasing. The FTC has shown particular concern over in-app charges in apps aimed at children, and has previously tackled in-app charges in actions against Apple, Google and Amazon.

- A company may deceptively offer a free trial period but then follow it with a recurring subscription charge, without the consumer’s knowledge, if they forget to cancel.

The FTC identified the design technique of ‘sludge’: a high-friction experience that, by its nature, causes people to become fatigued and give up. The FTC gave the example of an operator of a children’s online learning site, ABCMouse, which offered 30-day free trials with automatic rollover into 6- or 12-month memberships, promising “Easy Cancellation.” However, ABCMouse required consumers to navigate between six and nine screens to cancel their memberships. Consumers could not skip ahead or cancel without visiting each screen, and each screen included multiple links and buttons that, if pressed, would take consumers out of the cancellation path altogether.

In another case pursued by the FTC, children could download an Amazon game for free. A child user might then be prompted to use or acquire seemingly fictitious currency, including a ‘boatload of doughnuts, a can of stars, and bars of gold,’ but in reality the child was making an in-app purchase using real money. Amazon later added a password prompt for account holders only for in-app purchases of $20 or more, but that prompt failed to disclose that authorizing a single purchase also authorized unlimited purchases for the next 60 minutes. Ultimately, Amazon was forced to make more than $70 million in refunds available to consumers.

Obscuring or subverting privacy choices

Another pervasive dark pattern involves design elements that obscure or subvert consumers’ privacy choices. These dark patterns are often presented as giving consumers choices about privacy settings or sharing data but are actually designed to steer consumers toward the option that gives away the most personal information. One example is a user interface that highlights the company’s preferred choice to accept cookies, while greying out the option to deny or modify cookies.

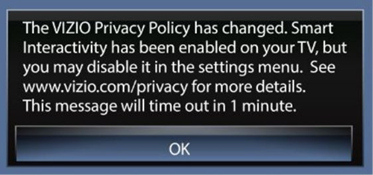

The FTC said that companies should make consumer choices easy to access and understand. It gave the example of FTC enforcement proceedings against a smart-TV manufacturer, Vizio. Vizio enabled a default setting on its TVs called “Smart Interactivity,” which ostensibly enabled consumers to receive “program offers and suggestions,” but in reality allowed Vizio to comprehensively collect and share consumers’ television viewing activity with third parties. At a certain point, it provided a notice to some consumers.

The FTC alleged that Vizio effectively removed consumers’ ability to make an informed choice about their data sharing because the notice timed out after one minute, it provided no direct link to the settings menu or privacy policy, and the setting name was too vague.

Toggle settings can be unclear: for example, a “Do Not Sell My Information” option followed by an “off” toggle creates a double negative and might make it unclear whether consumers need to toggle the setting on or off to prohibit the sale of their information.

The FTC also said that, even if offering opt-out privacy buttons, mobile apps may still by default collect mobile numbers. Because consumers tend not to change their mobile numbers (with number portability), app providers can use the mobile number to target advertising.

The FTC said that “businesses should, first and foremost, aspire to become good stewards of consumer personal information.”

Dark patterns impact on competition and consumers

The FTC’s report also warns that dark patterns may undermine fair competition by raising barriers to entry and disadvantaging companies that refuse to engage in such practices.

The FTC isn’t alone in taking this view. In its recently released Digital Platforms Services Inquiry fifth interim report, the ACCC makes recommendations that could tackle dark patterns, including:

- A prohibition on unfair trade practices to capture harmful dark patterns that fall outside of existing consumer law protections.

- Additional competition measures to address conduct by digital platforms with market power that frustrates consumer switching, including through dark patterns.

The ACCC suggests implementing these measures through service-specific codes, which impose targeted obligations such as prohibiting search services, mobile OS services or app store services from restricting a consumer's ability to change defaults and switch to alternative services.

Clearly, the regulators don’t just want to shine a light on dark patterns. They are now taking steps to prevent them and reduce their impact on consumers, including by bringing in new regulations. Businesses would be wise to make sure their websites and apps are designed to be clear and upfront, without any dark patterns.

Authors: Stephanie Dixon, Marina Yang, Jasleen Kaur and Peter Waters

Read More: Bringing Dark Patterns to Light
